Yet while Ada was lucky in the education she received, she has scarcely more ground for optimism than any other intellectually enthusiastic women of her day as regards finding an outlet for her mental energies after her education was completed.

…

For a woman of Ada’s day and social class who wished to lead a mentally fulfilling life, the opportunities were close to non-existent. There was generally little alternative but to marry, produce children, and live for one’s husband.

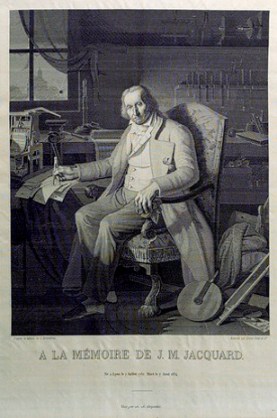

Jacquard’s Web, p. 126.

The Ada above is Ada Byron, the only legitimate child of Lord Byron, later known as Ada Lovelace (after marrying a man who later became the Earl of Lovelace). If you don’t know the name Ada Lovelace, you should. A close friend of Charles Babbage, who is sometimes referred to as the father of computers, she was inspirational and influential in developing and spreading the ideas behind Babbage’s Analytical Engine, which was the conceptual framework for the eventual practical creation of computers.

Over 100 years later.

The reason the development of computers happened closer to 1950 than 1850 lies partly in Babbage’s poor diplomatic and interpersonal skills, and partly in the politics of 1840s England (Sir Robert Peel, then the Prime Minister of the United Kingdom, had a part in this, being the miser who refused Babbage’s funding in 1842). Ada Lovelace, a woman of enthusiasm with a wonderful ability to explain the concepts that Babbage ingeniously foresaw, was not able to be the spokesperson or the valuable colleague that she might have been had Babbage’s stubbornness not been so potent.

The ‘Interpreter’

Many men in academia and technology may have some traditional precedent, in Charles Babbage, for treating women collaborators as mere assistants or, in this case, ‘interpreters.’ At least there is no evidence or reason to believe that Babbage sexually harassed Lovelace (it is not impossible that they were lovers, but there is little evidence of that either). Babbage was not especially misogynistic or awful; he was actually generally liked as far as I can tell, especially by Ada herself. But this was the 19th century, and misogyny was simply a stark truth about the European world.

Suffice it to say, Ada Lovelace may have had a much more profound influence on the earlier development of information technology had Babbage’s stubbornness and selfishness not been so debilitating to his obvious intellect. Perhaps there is a lesson in there for all intelligent and yet stubbornly selfish and short-sighted men in the various places where skepticism and technology reign.

But more universally, there is a lesson for all of us. Our intelligence, even if great, is often insufficient. We need more than mere processing power and memory to be wise, and perhaps it is wisdom which we should seek in addition to intellect. In too many cases, we see people with obvious intelligence (and memory, especially with everything logged online), but not as often do we see actual wisdom, perspective, or a willingness to challenge oneself. Had a man like Babbage been wiser, we might have had computers by 1900 rather than 1950. Further, the name Ada Lovelace might be remembered not as that of a mere interpreter or (dare I say) cheerleader for Babbage’s work, but as that of a fully recognized pioneer in information technology, a role of which she was more than capable.

Notes of the ‘Interpreter’

I made reference, above, to Charles Babbage shrugging Ada Lovelace off, despite their very close friendship and collaboration, as a mere ‘interpreter’ of his work. This flippant title was bestowed upon her due to her translation of a paper about Babbage’s Analytical Engine by an Italian mathematician, Luigi Menabrea (Lovelace’s translation can be found here). But in addition to translating the paper, she added 20,000 words or so of ‘Notes’ which give more detail and depth to Babbage’s ideas.

A new, a vast, and a powerful language is developed for the future use of analysis, in which to wield its truths so that these may become of more speedy and accurate practical application for the purposes of mankind than the means hitherto in our possession have rendered possible. Thus not only the mental and the material, but the theoretical and the practical in the mathematical world, are brought into more intimate and effective connexion with each other. We are not aware of its being on record that anything partaking in the nature of what is so well designated the Analytical Engine has been hitherto proposed, or even thought of, as a practical possibility, any more than the idea of a thinking or of a reasoning machine.

The “idea of a thinking or of a reasoning machine.” This was written in 1843, but the fundamental ideas were older than that, possibly tracing back to the night in December of 1834 when Babbage conceived of the Analytical Engine and explained the idea to three women, one of whom was a 17-year-old Ada.

Mind and Machine (skip this section if philosophy annoys you)

I have been thinking a lot recently about the so-called mind-body problem. I remember when I was first exposed to this philosophical problem, when I started reading philosophy around age 16 or so. I also remember, when I got to college, being surprised that people still thought it was a problem. I remember listening to people, usually Christians, who would defend a form of dualism, or at least of perceived difference, between their personal subjective experience and the seeming objectivity of the matter that was their brain. For many people, there really seems to be a disconnect here. I honestly don’t get it.

The idea that my subjective experience simply is what it is (like) to be my brain (well, my whole body really, but mostly my brain) seems intuitive to me. I don’t feel the disconnect between subjective experience and an objective (projected, really) external reality of ‘my brain.’ I recognize that the illusion is that separation, not either of the sides of the proposed dualism (I’m getting overly philosophical, I know).

This is why I sometimes understand idealists; I just think they are making the same basic error that dualists make–drawing a conceptual distinction between subjectivity and where that subjectivity occurs. (Stupid subject-predicate language, making it really difficult to express that idea!)

Some might balk at this and claim that they have no idea what it’s like to be a brain, but I will argue that this is all you know. You may say that you have never seen your brain, so you can only assume it exists, but this is disingenuous. You don’t literally see light reflecting off of any surface of your brain, to be focused and sent to your brain for processing, but everything you think is your brain. You could use the same argument for the back of your head. You will never directly see the back of your head (this bothers me for some reason…). All of the light reflecting and refracting, entering your eyes, etc., happens somewhere else. You are your brain, and so you have intimate knowledge of what it is like to be a brain.

Continuing with Plato’s cave as the basis for explanation, it is the (metaphorical) shadows on the wall–what Plato called the illusion–which are real. In other words, all that we ever really experience is our physical body. Our subjective experience is what it is like to be that body, experiencing the world. There is no separation of mind and body, because your mind is your body.

The looming question of AI (getting less philosophical)

Our brain is a machine. It’s a complicated machine and we don’t understand everything about how it works, but it is a machine. It is unlike the computers we build, because we designed our computers to work in a different, logical way (one that is largely based upon the technological ancestors of computer architecture, such as Jacquard’s Loom, the subject of the book I’m currently reading).

The bottom line is that our mind is a process which exists within matter–neurons and supporting tissue–within the brain. We are fully physical beings, made up of actual material stuff, like chemicals, atoms, and quarks.

There is no soul. There is no supernatural or dualistic spirit here. There is no reason to believe in one, and the very idea of dualism is fundamentally broken: to even talk about some supernatural substance, one has to steal from naturalism at the very least, and if that substance were truly separate, the two could not interact (creating a more perplexing problem for dualism that I will not dwell on). Mind, put overly simply, is a process of matter arranged in a complex and delicate way.

And at some point, it may be possible to replicate this type of process artificially. Now, I’m not much of a transhumanist, at least in the sense of being overly optimistic (or pessimistic) about some potential Singularity which may occur at some point in the (near or distant) future, but I do believe that it is technically possible to create intelligence with computers, and I’m fascinated that Ada Lovelace seemed to foresee this possibility 170 years ago.

Learning from mistakes and successes

For those of you who are disappointed that I didn’t make any horribly misogynistic jokes about women and being artificially intelligent, fuck off. For those of you who see that our ability to progress–socially, politically, culturally, and technologically–is hampered by our inability to see past the mundane and conservative elements of our nature, then I gladly embrace you as a collaborator, no matter your gender.

We as a culture have come a long distance, and we have a long way yet to go. We must learn from our errors, yes, but we also must pay attention to when, and how, we succeed. Babbage didn’t succeed with his project to create an Analytical Engine in his lifetime because he was stubborn, unwilling to re-consider his abilities and deficiencies, and because some of the powers to which he was subject were more concerned with politics than the potential of human ingenuity.

Babbage dropped the ball in arguing his case for government funding to Sir Robert Peel (who was, it is agreed, already prejudiced against the project) by complaining about mistreatment and loss of reputation at the hands of people in his community. And yes, hindsight biases our judgment of Peel’s refusal, living as we do in a computer age. But it is worth remembering that Babbage never took the time to humble his admittedly great intellect and challenge himself to see the problem from another angle, or even to accept Lovelace’s suggestion that she be his spokesperson in procuring funding for his work. He probably never succeeded in creating his Analytical Engine because of those faults.

But he left plenty of notes behind, and people used them. The eventual development of the computer was largely dependent upon the work he and his colleagues did in the middle of the 19th century. Imagine how much more, and better, they would have done had they not excluded more voices than they heard. Imagine how much Babbage could have accomplished had he not been so stubborn and, well, conservative (I realize the term ‘conservative’ is anachronistic in this context).

Further, imagine how much we can accomplish if we all start being inclusive in all aspects of our social, cultural, and technological pursuits. Or maybe it will take the Singularity to reach such inclusiveness, since so many people seem unable to escape their own tribalistic nature, and then ironically accuse others of tribalism. Much like the inseparability of mind and body, the great rift is the community. The problem, of course, is differentiating between the healthy tissue and the cancer.

When a community has some individuals with an un-drifting mission to merely replicate and spread, with more concern for freedom than safety, while other parts listen to the world in order to decide what to do and how to do it better, I think that is called brain cancer.
